Why We Launched Federated Detection at RSA 2026

Everyone talks about response. Faster response. Smarter response. Automated response. AI-assisted response. Meanwhile, the thing response depends on most is still stuck in a world of centralized log pipelines, manual rule engineering, and cost-driven compromises over what data gets kept in the first place.

That thing is detection.

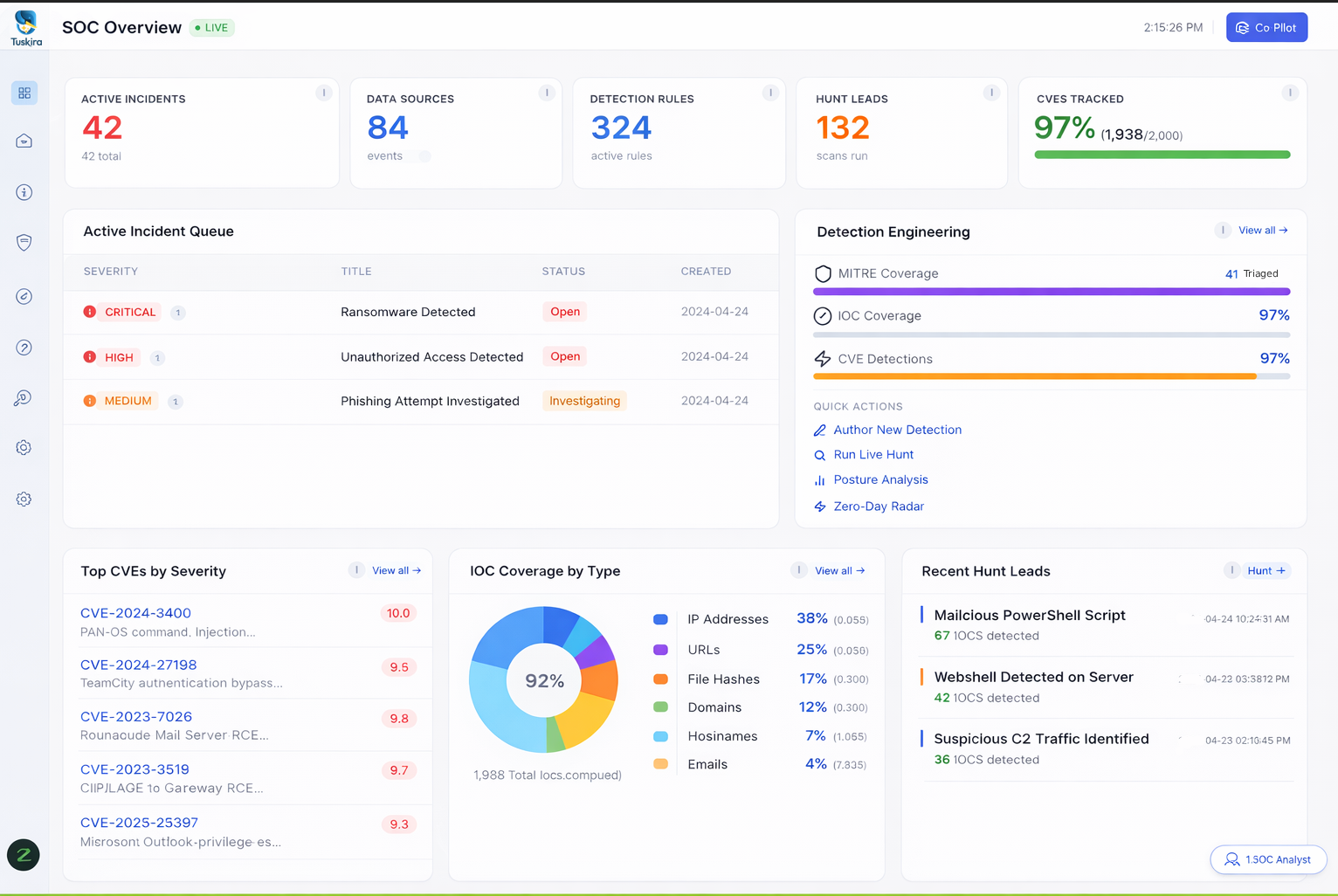

This week, at RSA 2026, we launched Federated Detection, a new capability in Tuskira’s Agentic SecOps platform. On paper, it means we can generate detections directly across distributed environments without forcing all raw logs into a centralized pipeline first. In practice, it means security teams no longer have to accept the old tradeoff that has quietly warped detection engineering for years: centralize everything and pay for it, or reduce what you ingest and lose both detection coverage and the context needed to understand how attacks actually move.

That tradeoff was always a losing proposition.

Detection broke before the SOC noticed

The market has spent years obsessing over alert fatigue, analyst burnout, and workflow automation, which is a bit like arguing over the ambulance’s speed while ignoring the bridge that collapsed upstream.

The real problem is that detection engineering has become the bottleneck in security operations.

Attackers are moving faster across cloud, identity, endpoint, SaaS, network, and storage environments. Some are using AI to accelerate reconnaissance, attack chaining, and execution. Security teams, meanwhile, are often constrained by what their centralized architecture can ingest, normalize, store, and query. That’s an economics problem, not just a tooling one.

When log costs rise, teams reduce retention, granularity, sources, or scope. You’re no longer just managing data; you’re deciding what attacks you may never be able to see clearly enough to detect.

What we launched, really

Federated Detection changes the starting assumption.

Instead of moving raw telemetry into one place and hoping the pipeline is broad enough, fast enough, and cheap enough to support real detection coverage, Tuskira brings detection logic to where relevant data already lives.

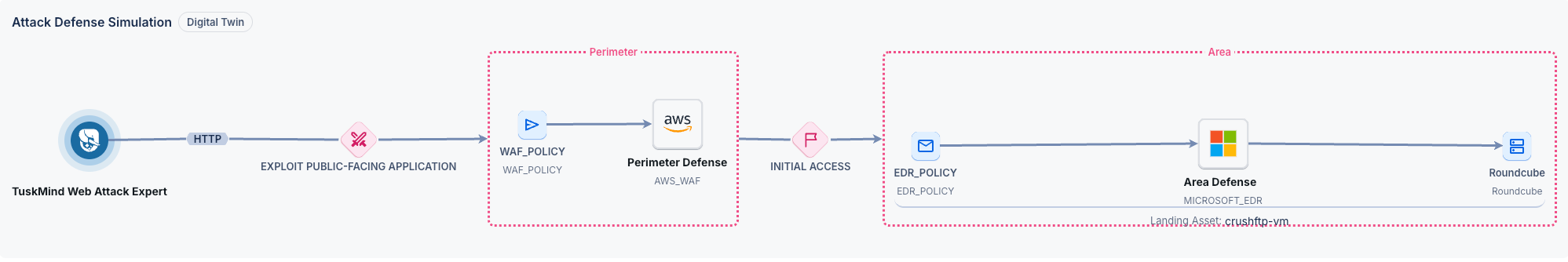

That means detections can be generated across distributed environments, then correlated through Tuskira’s Security Context Graph, where threat, asset, identity, and control context are connected into a shared model of attacker behavior and breach paths. From there, detections are validated through AI-driven triage and turned into operational outcomes without first making log centralization the price of admission.

This is a different operating model for detection, one that connects distributed detections to shared threat and control context across the SOC.
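To make the shape of that model concrete, here is a minimal sketch of evaluating one rule next to the data and returning only compact detections. It is an illustration under stated assumptions, not Tuskira’s implementation: `LocalSource`, `Detection`, `run_federated`, the rule name, and the sample events are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

Event = dict  # one raw log record; it never leaves its source in this sketch


@dataclass(frozen=True)
class Detection:
    rule: str
    source: str
    entity: str  # the identity or asset the matching event is about


@dataclass
class LocalSource:
    """Hypothetical stand-in for one data plane (identity, cloud, endpoint...)."""
    name: str
    events: list

    def evaluate(self, rule_name: str, predicate: Callable[[Event], bool]) -> list:
        # The rule runs where the data lives; only small Detection records are returned.
        return [
            Detection(rule=rule_name, source=self.name, entity=e["entity"])
            for e in self.events
            if predicate(e)
        ]


def run_federated(rule_name: str, predicate: Callable[[Event], bool],
                  sources: Iterable[LocalSource]) -> list:
    """Fan one rule out to every source and gather the resulting detections."""
    detections = []
    for src in sources:
        detections.extend(src.evaluate(rule_name, predicate))
    return detections


# Illustrative sample data, invented for this sketch.
identity = LocalSource("identity", [
    {"entity": "alice", "action": "login", "mfa": False},
    {"entity": "bob", "action": "login", "mfa": True},
])
cloud = LocalSource("cloud", [
    {"entity": "alice", "action": "create_access_key", "mfa": False},
])

hits = run_federated(
    "no_mfa_activity",
    lambda e: not e.get("mfa", True),
    [identity, cloud],
)
for d in hits:
    print(d)
```

The design point is what crosses the boundary: the raw events stay in each `LocalSource`, and the caller only ever sees `Detection` records that can be correlated later.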

The old model was built for a slower world

The centralized model made a certain kind of sense when attacks moved slowly enough that human teams could write, tune, and maintain detection rules at roughly the pace of the threats themselves. That’s no longer the environment in which anyone operates.

Now the signals that matter are scattered across systems that don’t naturally cooperate, leaving analysts to reconstruct intrusion paths by hand across identity, endpoint, cloud, network, storage, and SIEM data.

That’s not a sustainable operating model. It’s a tax on detection quality.

Context is what turns detections into insight

A detection, by itself, is often just a suspicious event with good branding. What matters is whether it belongs to something larger.

And that context isn’t just threat context; it’s control context too. A useful detection is a signal placed inside a map of identities, assets, infrastructure relationships, compensating controls, and known breach paths, so the SOC can understand what fired, how an attacker is moving, what defenses already exist, where the real exposure lies, and what action is most likely to matter.

That’s why this launch is tied to a Security Context Graph. It’s where identities, assets, attacker behaviors, and infrastructure relationships are connected into a unified threat model, so the platform can surface breach paths, APT activity, and multi-stage attacks as connected realities rather than disconnected alerts.

Without that context, teams get more findings. With it, they get a better chance of understanding what those findings mean.
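One way to picture that difference is a small graph exercise: find a path from a compromised identity to a sensitive asset, then keep only the detections that sit on it. The sketch below is a generic breadth-first search over a toy graph, not the Security Context Graph itself; the node names, edges, and detection records are invented for illustration.

```python
from collections import deque

# Hypothetical context graph: an edge means "can reach" or "has access to".
GRAPH = {
    "user:alice": ["host:laptop-7", "role:dev"],
    "host:laptop-7": [],
    "role:dev": ["bucket:build-artifacts"],
    "bucket:build-artifacts": ["db:customer-records"],
    "db:customer-records": [],
}


def shortest_path(graph, start, goal):
    """Breadth-first search; returns the node list from start to goal, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


def detections_on_path(path, detections):
    """Keep only detections whose entity sits on the candidate breach path."""
    on_path = set(path or [])
    return [d for d in detections if d["entity"] in on_path]


# Illustrative detections, as if gathered from separate tools.
detections = [
    {"rule": "impossible_travel", "entity": "user:alice"},
    {"rule": "bulk_download", "entity": "bucket:build-artifacts"},
    {"rule": "port_scan", "entity": "host:printer-2"},
]

path = shortest_path(GRAPH, "user:alice", "db:customer-records")
related = detections_on_path(path, detections)
print(path)
print([d["rule"] for d in related])
```

Taken alone, the three detections are unrelated alerts. Placed on the graph, two of them line up along a single route to customer data, and the third drops out as unrelated to that path.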

Hunting shouldn’t live in a separate universe

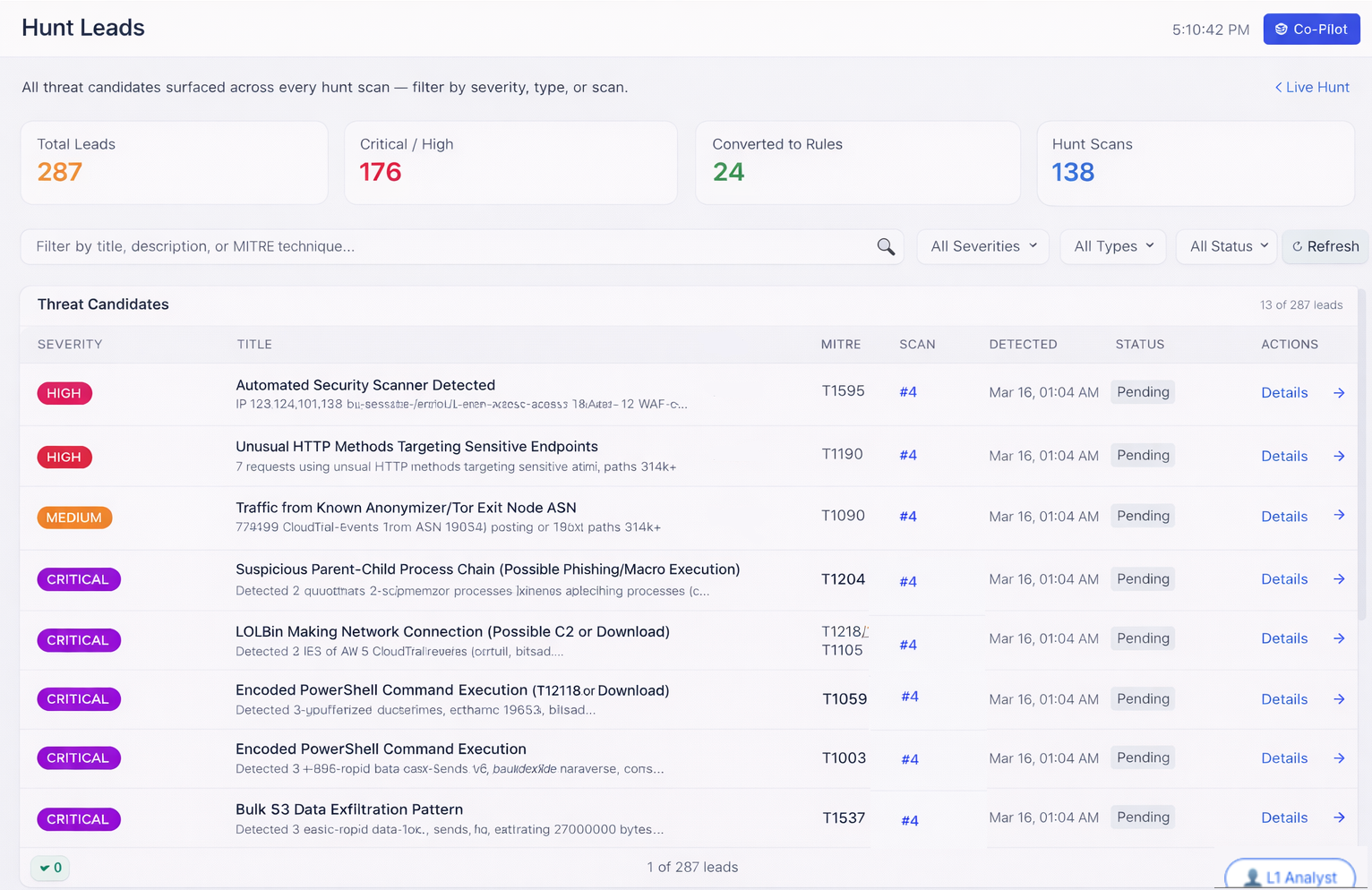

On many security teams, threat hunting is treated like a side project for specialists: the sort of thing smart people do when there’s time, which is to say not often enough, and rarely in a way that feeds back into the rest of the system.

That’s another way the old model breaks down.

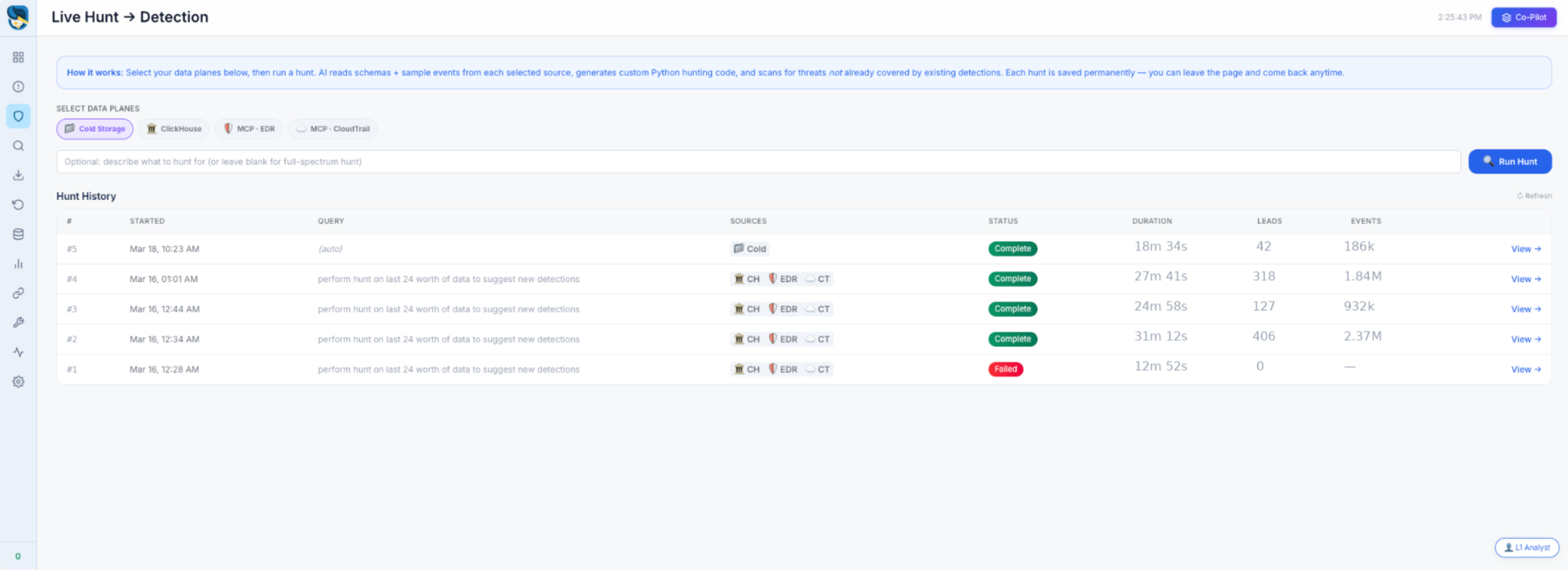

With Federated Detection, hunts can run across distributed data planes, surface new candidates, and feed directly into detection creation and refinement. That shortens the distance between “we found something interesting” and “we can now detect this reliably going forward.” Which is another way of saying the SOC gets a memory.
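As a rough sketch of that hunt-to-detection loop, the example below runs an ad hoc hunt over historical events and, when it finds something, promotes the same predicate into a standing rule. Everything here is hypothetical (`RuleLibrary`, `hunt`, the hypothesis, the sample events), and a real workflow would put analyst review and testing between the finding and the promotion.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class RuleLibrary:
    """Hypothetical in-memory rule store; a real one would be versioned and tested."""
    rules: dict = field(default_factory=dict)

    def promote(self, name: str, predicate: Callable[[dict], bool]) -> None:
        # Turn a hunt hypothesis into a standing detection.
        self.rules[name] = predicate

    def evaluate(self, event: dict) -> list:
        # Names of all rules that fire on this event.
        return sorted(name for name, pred in self.rules.items() if pred(event))


def hunt(events, predicate):
    """Ad hoc hunt: return the events matching an analyst's hypothesis."""
    return [e for e in events if predicate(e)]


# Illustrative hypothesis: service accounts should not log in interactively.
def interactive_service_login(event):
    return event.get("account_type") == "service" and event.get("interactive") is True


history = [
    {"account": "svc-backup", "account_type": "service", "interactive": True},
    {"account": "alice", "account_type": "user", "interactive": True},
]

library = RuleLibrary()
findings = hunt(history, interactive_service_login)
if findings:  # stand-in for analyst confirmation
    library.promote("interactive_service_login", interactive_service_login)

# A later event is now caught without anyone repeating the hunt.
new_event = {"account": "svc-deploy", "account_type": "service", "interactive": True}
fired = library.evaluate(new_event)
print(fired)
```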

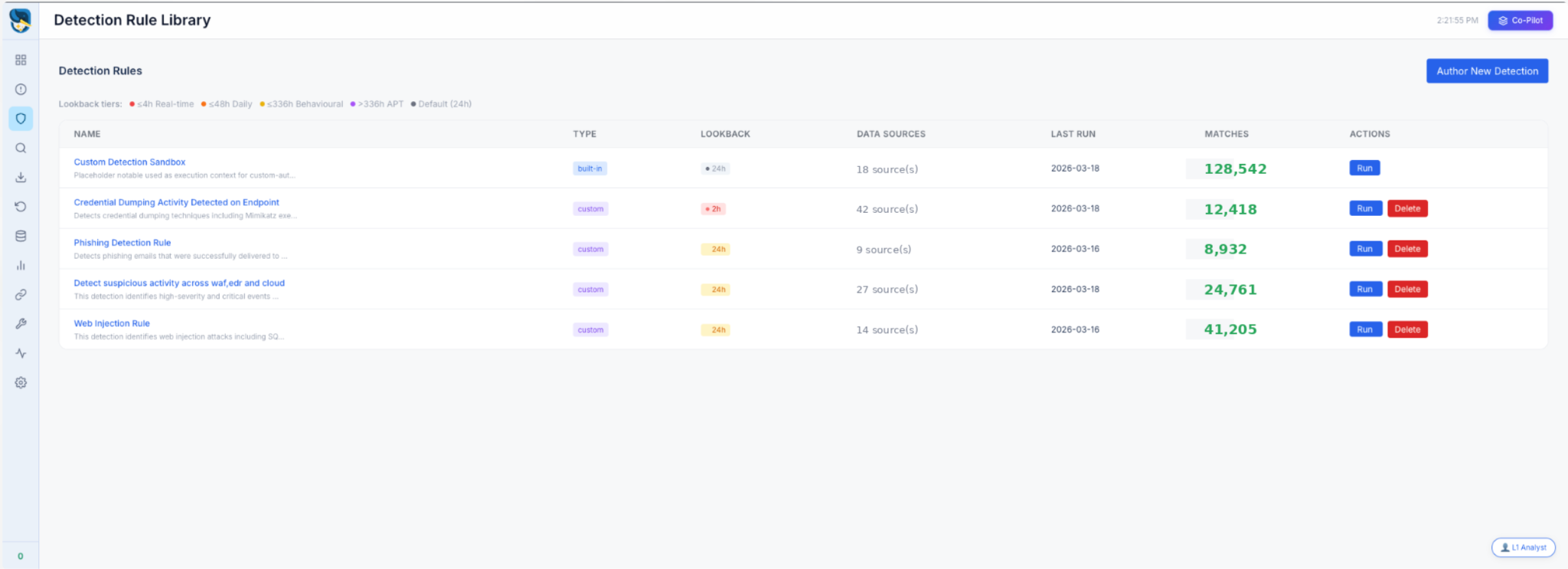

A rule library should not be a graveyard

Most detection libraries are archives of past conviction. Someone once believed these rules mattered. Some still do. Many don’t, but almost all require more maintenance than teams can realistically provide.

The point of Federated Detection isn’t simply to create more rules. It’s to make detection engineering a live system that can evolve faster, no longer trapped by the same centralization constraints and manual bottlenecks.

That’s why the launch matters to the larger Tuskira story. Better detections improve triage. Better triage improves investigation. Better investigation improves response. Better response creates better feedback.

The system starts to tighten instead of drift.

That’s also why Federated Detection isn’t a standalone detection feature. It is the front end of a broader SecOps loop spanning threat detection, triage, hunting, and containment. The detection layer matters because it feeds shared context into the rest of the platform, and the rest of the platform matters because it turns detections into investigations, breach-path visibility, and response actions that improve over time.

What this changes for security teams

In plain terms, Federated Detection gives teams a way to:

- Reduce dependence on full log centralization while preserving access to meaningful signals across distributed environments

- Improve visibility into multi-stage attacks by correlating detections through shared context instead of reconstructing timelines by hand

- Reduce false positives through continuous AI-driven validation, so analysts spend less time ruling out noise and more time on findings that matter

- Move faster from detection to investigation to containment by shrinking the gap between “we found something” and “we can act on it”

Together, these changes shift the economics of detection itself. This launch is about removing the architectural and cost constraints that have limited what many security teams could detect in the first place. If detection can’t keep up, nothing downstream really matters.

See it at RSA

Detection has always been the part of the problem nobody wanted to call unfixable. Centralize more, ingest more, pay more, and still accept that some attacks would fall through gaps you couldn’t afford to close. Federated Detection is built on the premise that this tradeoff was never actually necessary.

If you’re at RSA, come see it live at Booth #261 in Moscone South. We’ll show how Tuskira generates detections across distributed environments, correlates them through shared context, validates them through AI triage, and tightens the operational loop across the SOC, starting from where the data actually lives.