JPMorgan Just Made the Case for a New Security Operating Model

On April 17, 2026, JPMorgan Chase published "Fortifying the enterprise: 10 actions to take now for AI-ready cyber resilience." It's worth reading in full. It’s also worth being honest about what it implies for the tools most security teams already own, and for the operating model sitting on top of them.

To be clear about the significance: one of the largest financial institutions on the planet is publicly stating that AI is compressing the time from vulnerability discovery to exploitation, that patch cycles are exceeding most organizations' capacity for change, and that resilience now depends on asset context, reachability-aware prioritization, continuous scanning, compensating controls, exercised response plans, and AI-augmented defense. They were explicit that vulnerability data should be correlated with "asset criticality, application context and reachability, threat intelligence, and exploit availability" rather than "chasing volume indiscriminately." They were equally explicit that resilience is "proven in practice, not in documentation."

That's the operating model shift, and here's what it actually means for the stack you already have.

Three Failure Modes of the Current Stack

Most Fortune 500 security programs have somewhere between 60 and 130 security products deployed. The problem is that the stack produces findings faster than the organization can validate them. We see three specific failure modes come up again and again.

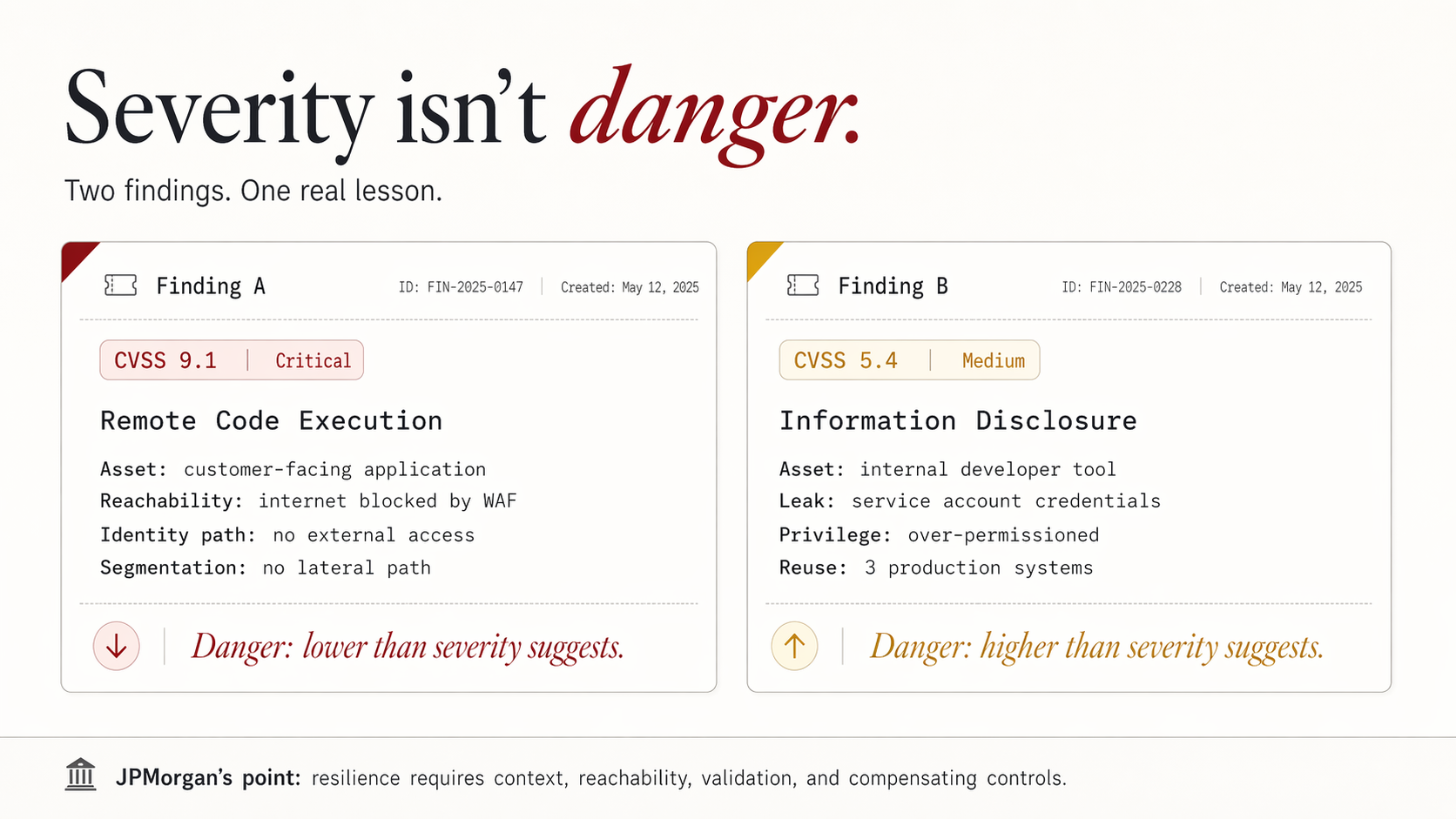

The vulnerability scanner treats severity as danger. A CVSS 9.8 on an internet-facing asset and a CVSS 9.8 on an air-gapped, segmented, WAF-protected internal workload show up identically in the queue. The scanner has no concept of reachability, no concept of compensating controls, and no concept of which identities can actually touch the asset. Teams triage by severity score and ticket age, which is a ranking almost uncorrelated with real breach potential. This is the exact dynamic JPMorgan warned against.

The CAASM or ASPM platform produces inventory without operational context. It knows what you have. It doesn't know what's dangerous. Asset ownership is frequently stale, business criticality is self-reported, and the "context" the platform adds is typically metadata rather than live telemetry. When an analyst asks, "Is this exposure reachable from the internet through an identity path that bypasses our segmentation?" the tool can't answer.

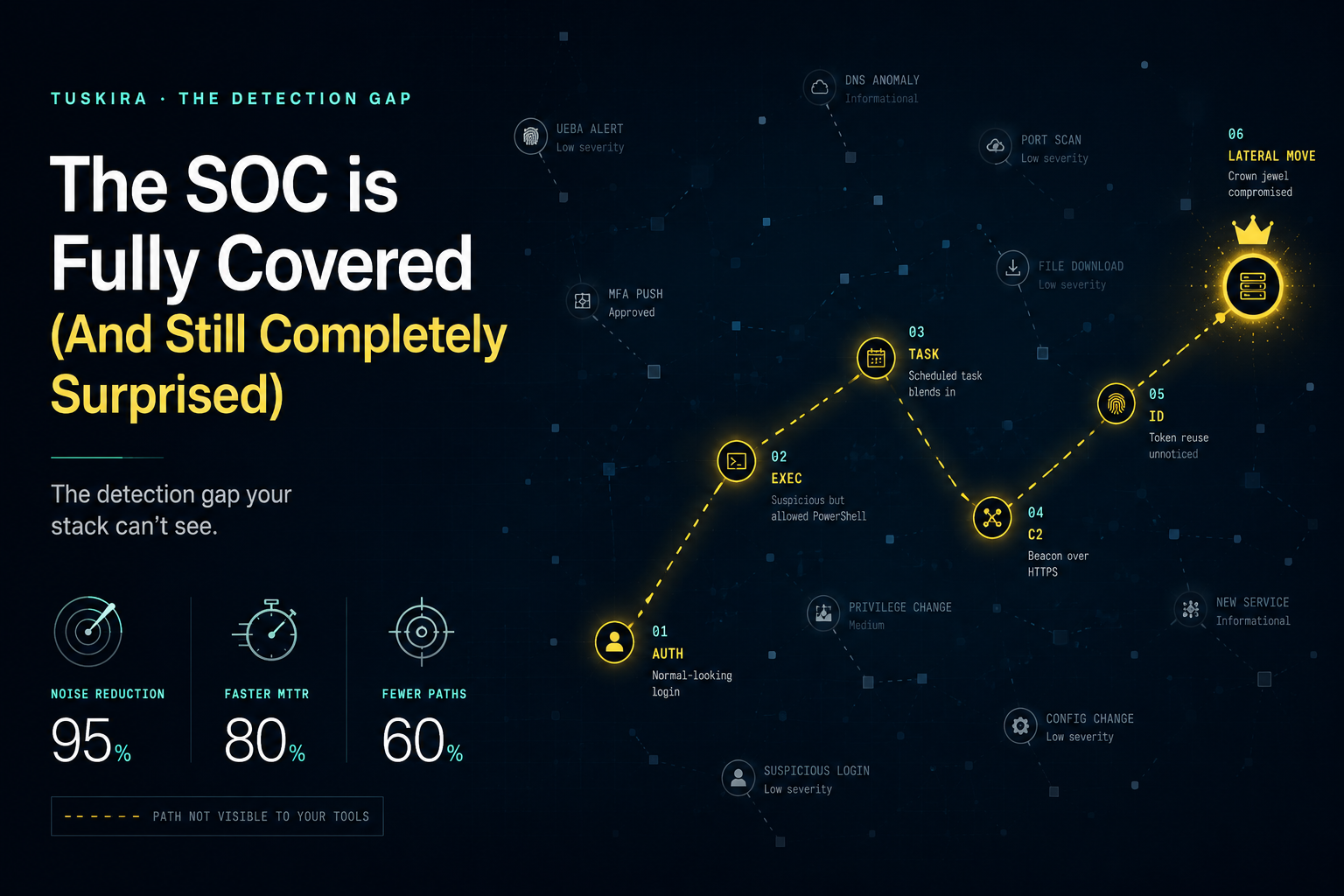

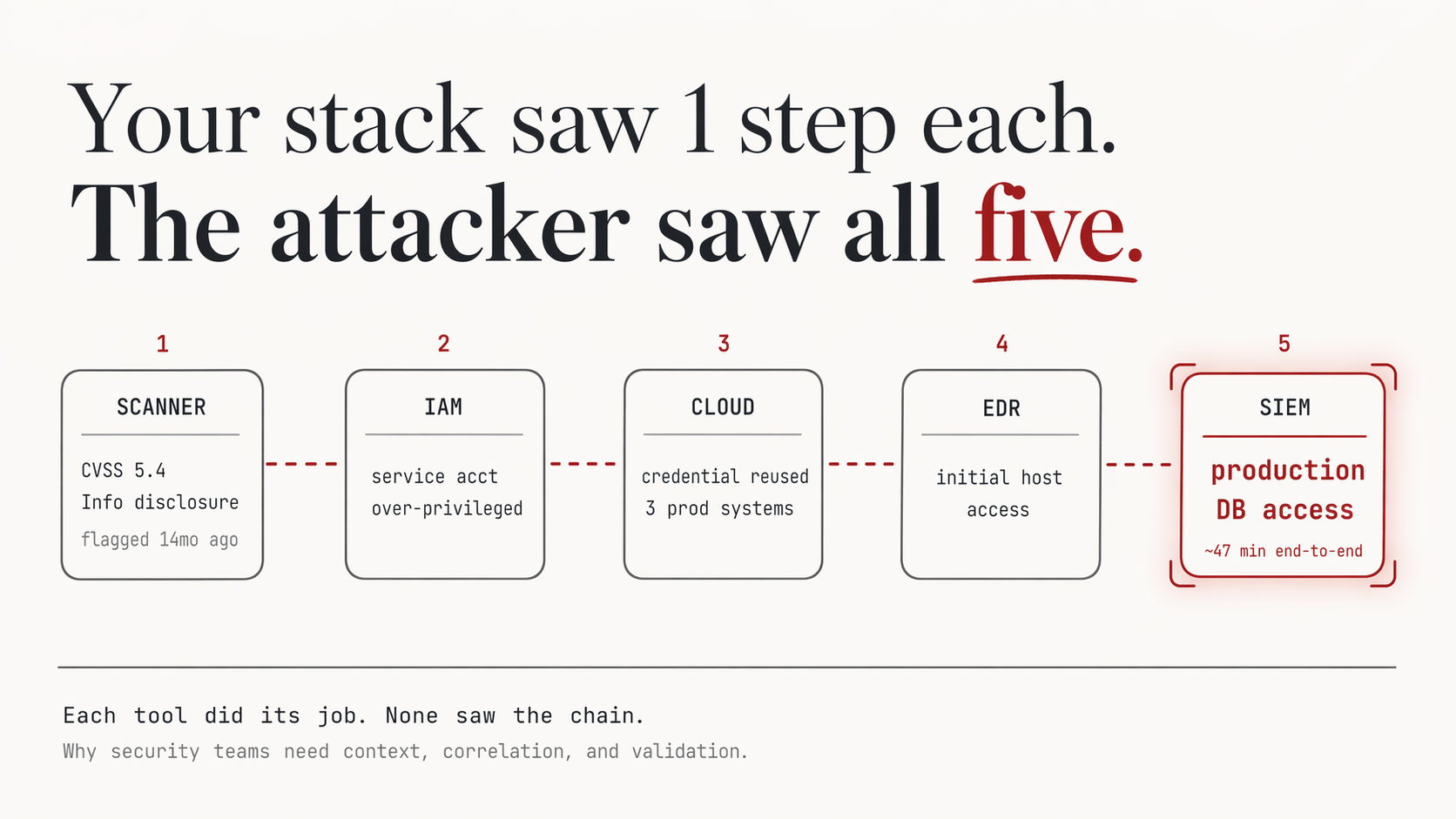

Detection, exposure, and identity tools operate in silos. The EDR sees process behavior. The SIEM sees logs. The vuln scanner sees CVEs. The IAM system sees entitlements. Correlating across them to answer "does this exposure plus this identity weakness plus this misconfiguration create a viable attack path?" is a human job done in spreadsheets, Jira tickets, and Slack threads.

JPMorgan's prescription is to answer "where are we exposed?" in minutes, not days. That is impossible when cross-tool correlation is done by hand. Attackers, meanwhile, are using AI to traverse exactly those gaps.

None of this is a failure of any individual tool, as each does what it was designed to do. The failure is that the operating model on top of them hasn't changed in a decade.

Two Findings, One Real Lesson

Consider two findings from a recent engagement with a mid-market financial services firm.

Finding A: Critical (CVSS 9.1) remote code execution in a widely deployed web framework on a customer-facing application. The team was 48 hours into an emergency patch cycle, pulling engineers off roadmap work, when a deeper look at the environment showed the vulnerable endpoint was unreachable from the internet (protected by a WAF rule deployed six months earlier for an unrelated compliance finding), required authenticated access that no external identity possessed, and sat behind a network segment with no lateral paths to sensitive data. The finding was real. The danger was materially lower than the severity score suggested. Resources should have been allocated on a normal patch cadence, not on a fire-drill basis.

Finding B: Medium (CVSS 5.4) information disclosure in an internal developer tool. It had been in the backlog for fourteen months. No one had prioritized it. Walking the environment, the exposure turned out to leak service account credentials that were over-privileged, reused across three production systems, and not rotated in more than a year. The "medium" finding was a functional domain compromise waiting to happen, an attack path a competent adversary could execute in under an hour once they had initial access. It should have been a P0 the day it was filed.

This is the gap. Severity scores, measured in isolation, misrank risk in both directions: Finding A was overrated and Finding B was underrated. JPMorgan's call for correlation across asset criticality, application context, reachability, and exploit availability is the only way to close it.
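To make the two-directional misranking concrete, here is a minimal sketch in Python of a context-adjusted priority score applied to the two findings above. Everything in it is an assumption made for illustration: the `Finding` fields, the `contextual_priority` function, and the weights are hypothetical, not a standard formula and not a description of how any particular product scores risk.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    cvss: float                # base severity score, 0.0 to 10.0
    internet_reachable: bool   # can an external attacker reach the vulnerable endpoint?
    verified_control: bool     # is a compensating control confirmed to block the path?
    leaks_credentials: bool    # does exploitation expose reusable credentials?
    downstream_systems: int    # production systems reachable with what the finding yields


def contextual_priority(f: Finding) -> float:
    """Hypothetical heuristic: scale the base score by environmental context.

    The multipliers are invented for this sketch. The result is a relative
    ranking value, not a CVSS score, so it is not capped at 10.
    """
    score = f.cvss
    if not f.internet_reachable:
        score *= 0.5           # harder to reach, so lower urgency
    if f.verified_control:
        score *= 0.5           # a working control buffers the exposure
    if f.leaks_credentials:
        score *= 1.5           # leaked credentials extend the attack path
    score *= 1 + 0.25 * f.downstream_systems
    return score


# The two findings from the engagement, encoded with the context described above.
finding_a = Finding("Finding A", cvss=9.1, internet_reachable=False,
                    verified_control=True, leaks_credentials=False,
                    downstream_systems=0)
finding_b = Finding("Finding B", cvss=5.4, internet_reachable=False,
                    verified_control=False, leaks_credentials=True,
                    downstream_systems=3)

ranked = sorted([finding_a, finding_b], key=contextual_priority, reverse=True)
print([f.name for f in ranked])
```

Under these assumed weights, the "medium" Finding B outranks the "critical" Finding A, which is the reordering the engagement arrived at by hand. The exact numbers matter less than the inputs: reachability, verified controls, and what an attacker gains downstream.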

Where Tuskira Fits in JPMorgan's Prescription

Tuskira is a full-stack agentic SecOps platform. It’s fun to say three times fast, but the honest way to describe us is that we sit above the tools you already have (your scanners, CAASM, EDR, SIEM, IAM, cloud security platforms) and turn their fragmented output into a single operational view of real exposure, real attack paths, and real defensive readiness. We're not replacing your scanner or your EDR. We're replacing the manual correlation work that currently happens between them, the static triage queues that result from treating every finding as equal, and the weeks-long gap between a new threat emerging and a deployed cross-source detection.

Concretely, here's how that maps to what JPMorgan is asking for.

"Answer where are we exposed? in minutes, not days." Tuskira operates on a federation model rather than a data-lake model. Alerts and telemetry stay in the systems you already own (Splunk, Sentinel, your EDR, your cloud-native logs) and are queried in place through MCP connectors. There's no re-ingestion tax and no duplicate storage bill. When an analyst asks where you're exposed to a new CVE, the answer comes from live queries across the stack, not from a stale index rebuilt nightly.

"Correlate vulnerability data with asset criticality, application context, reachability, threat intelligence, and exploit availability." This is the core of what the platform does. Findings aren't treated as isolated records. They're analyzed in context, so where the asset sits, what it connects to, whether it's reachable, what identities can touch it, what threat signals surround it, and what controls already reduce the risk. The output is a ranked view of exploitable exposure, with the reasoning exposed.

"Use perimeter controls to mitigate exposure while you fix vulnerable software." When a patch isn't available or isn't feasible on the threat timeline, Tuskira surfaces which compensating controls (WAF rules, segmentation boundaries, identity tightening, detection tuning) can materially reduce risk right now, and validates whether they're actually doing so against the specific attack paths that exposure creates. In most programs today, it's manual and episodic.

"Resilience is proven in practice, not in documentation." Tuskira's investigation framework continuously tests whether the controls you believe are protecting you actually interrupt the attack paths those exposures create. An exposure that looks dangerous on paper but is buffered by a verified control is ranked accordingly. An exposure that looks mild but creates a viable path (Finding B in the example above) is surfaced with the path made explicit.

"Invest in AI-augmented defensive capabilities to match the pace of the threat." Tuskira's L1 triage agent produces verdicts with confidence scores and a full reasoning trace. Confirmed cases auto-escalate to an L2 investigation agent that builds a MITRE-mapped attack timeline, blast-radius analysis, and tiered response recommendations. Containment actions are architecturally human-in-the-loop (the agent recommends, the analyst approves), which is the right posture for the failure mode JPMorgan is worried about. Investigation runs at machine speed; consequential action stays under human judgment.

The category is Agentic SecOps with an exposure-management and defense-validation core. What it displaces in practice is the manual correlation workflows, the static risk-scoring spreadsheets, the siloed triage queues, and the quarterly red-team reports that are stale the day they're delivered. It coexists with the detection, prevention, and scanning layers rather than competing with them.

The Question for Security Leaders

The useful question isn't "do we have the right tools?" Most Fortune 500 programs do. The useful question is whether the operating model on top of those tools can answer, in minutes rather than weeks:

- Which of our current exposures are actually reachable?

- Which are buffered by controls that are verifiably working?

- Which create realistic attack paths to material systems?

- And where is analyst time being spent on noise rather than on things that would stop a breach?

If those questions have clear answers in your program today, you're ahead of most of the industry. If they don't, and JPMorgan's article is a reasonable yardstick for what "ahead" now means, that's the conversation worth having.