Your SIEM Is Costing You the Fight

The log-centralization model wasn't built to stop modern attacks. It was built for a different era, and attackers have already moved on.

The math was never in your favor. You pay to ingest logs. You pay to store them. You pay analysts to write detection rules against them. Then you pay again when attackers find the gaps between those rules.

That's the SIEM bargain. And most security teams have been living with it for so long they've stopped asking whether it's worth it.

SIEMs Were Built for a World That No Longer Exists

When SIEM became the center of enterprise security operations, the threat model was simpler. Perimeters were real. Attacks came from outside, moved in one direction, and left evidence in logs you could actually collect.

Today's environment is distributed by design. Identity lives in Okta or Azure AD. Workloads run in AWS and GCP. Endpoints are everywhere. Network traffic moves across providers you don't fully control. And attackers know exactly how to exploit the gaps between all of it.

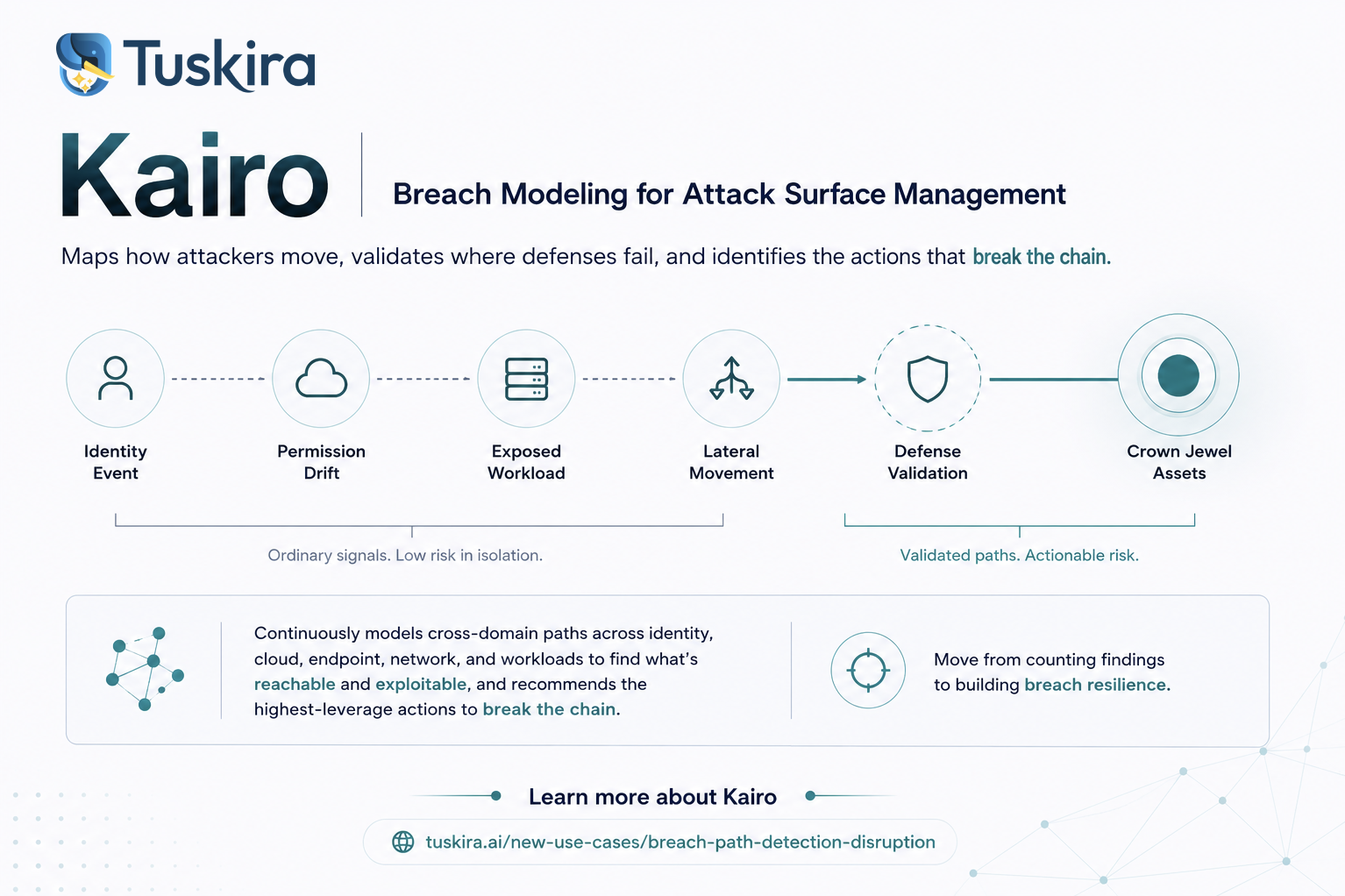

Modern breaches are coordinated progressions. An attacker compromises an identity, moves laterally through cloud infrastructure, pivots to an endpoint, and exfiltrates data. The full picture only becomes visible when you can correlate across all of it at once, in motion rather than after the fact.

SIEMs were designed to ingest and search data. Not to reason across a distributed environment in real time.

The Centralization Tax Is Getting Worse

Log volumes are growing faster than any organization's willingness to pay to ingest them. Many enterprises now route only a fraction of their actual telemetry into the SIEM because full coverage is prohibitively expensive.

So security teams make choices. They triage what they send. They optimize for "important" logs. They write detection rules against incomplete data and wonder why things still get missed.

Here's the structural failure: centralization creates a bottleneck at exactly the moment when speed matters most. By the time a log event travels from an endpoint through a collector, through a pipeline, into a SIEM, and is finally evaluated against a detection rule, attackers have already moved. That's not a latency problem you can engineer your way out of. It's an architectural one.

You're reasoning about the past, while attackers are operating in the present.

Static Rules Don't Catch Dynamic Attackers

The other problem with SIEM-centric detection is the rulebook.

Detection rules are written by humans, tuned over time, and validated against known attack patterns. That works in a threat landscape that doesn't evolve, but modern attacker TTPs shift faster than most teams can update their Sigma rules.

The result is a detection posture that's perpetually behind. Rules get written after incidents. Coverage gaps get discovered after breaches. And the same attack can land twice because the feedback loop between detection and response is too slow, too manual, and too dependent on individual analyst expertise.

When the same gaps remain after every breach because defenses don't compound, you have a system design problem.

The Queue Metaphor Is the Tell

If you want to understand why security operations keep losing ground, look at how work actually flows through a SOC.

Alert. Ticket. Analyst. Triage. Escalation. Remediation.

That's a queue. It's optimized for throughput and closure rates. It measures success in MTTD and MTTR. It rewards analysts who close tickets fast rather than those who understand attacker behavior deeply.

Attackers, however, don't operate through queues. They operate through paths. They move across identity, cloud, endpoint, and network as a coordinated progression, exploiting gaps between your tools, data silos, and detection coverage. They reason across your entire environment, while you reason in isolated products.

The queue model was never built to catch a coordinated path. It was built to process discrete alerts. The gap between those two things is where most breaches live.

Some organizations have tried to fix this by partnering with autonomous AI SOC vendors, platforms that apply machine-driven triage to compress alert volume, automate L1 and L2 investigation, and reduce the burden on human analysts. That's a real capability, and in the right context, it meaningfully changes how fast a SOC can operate. Analysts spend less time on routine noise. Response times improve. The queue moves faster.

But here's what faster queue processing doesn't solve: detection gaps. If your underlying detection architecture is missing coverage across cloud, identity, or network telemetry, if your rules are stale, your log sources are incomplete, or your visibility is siloed by tool, then an AI triage layer is just accelerating decisions made on incomplete information. You're not closing breaches faster. You're closing tickets faster, and those aren't the same thing. Autonomous triage built on top of fragmented detection doesn't eliminate the structural problem.

What the Alternative Actually Looks Like

Fixing this isn't about replacing your SIEM with a bigger SIEM. It's about changing the architecture of detection.

Detect at the source, not after centralization. Generate detections directly at the EDR, the firewall, the S3 bucket, the identity provider, and federate intelligence across those sources without moving all the data. Trade ingestion cost for detection coverage. Trade centralized storage for distributed reasoning.
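
To make "detect at the source" concrete, here is a minimal Python sketch. Everything in it is hypothetical and exists only for illustration: the Detection record, the two rule functions, the event fields, and the thresholds do not come from any real product. Each source evaluates its own events locally and forwards only compact detections, so the raw telemetry never has to move.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Detection:
    """Compact record forwarded instead of raw logs (hypothetical schema)."""
    source: str   # where the detection was generated
    entity: str   # the user, host, or resource involved
    signal: str   # short label for what was observed


def detect_at_source(source: str,
                     events: Iterable[dict],
                     rule: Callable[[dict], bool]) -> list[Detection]:
    """Run a rule next to the data and return only the matching detections."""
    return [Detection(source, e["entity"], rule.__name__)
            for e in events if rule(e)]


# Illustrative rules; field names and thresholds are assumptions.
def impossible_travel(event: dict) -> bool:
    return event.get("geo_velocity_kmh", 0) > 1000


def public_bucket_read(event: dict) -> bool:
    return event.get("action") == "GetObject" and event.get("public", False)


# Raw events stay where they were produced.
idp_events = [
    {"entity": "alice", "geo_velocity_kmh": 4200},
    {"entity": "bob", "geo_velocity_kmh": 12},
]
s3_events = [
    {"entity": "alice", "action": "GetObject", "public": True},
    {"entity": "carol", "action": "PutObject", "public": False},
]

# Only detections are federated to the shared layer.
federated = (detect_at_source("idp", idp_events, impossible_travel)
             + detect_at_source("s3", s3_events, public_bucket_read))

raw_count = len(idp_events) + len(s3_events)
print(f"{raw_count} raw events stayed at the source; "
      f"{len(federated)} detections were federated")
```

The point of the sketch is the shape of the trade: the volume that crosses the wire scales with detections, not with log lines.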

Build a context graph, not a log repository. Maintain a unified model of your identity, endpoint, cloud, and network environment, and reason across it continuously. When an attacker moves from a compromised identity to a cloud workload and then laterally to another system, you see it as a connected path, not as three separate alerts with no shared context.
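
One way to picture the context graph is as a small Python sketch, under stated assumptions: the ContextGraph class, the entity names, and the alert labels below are all invented for illustration. Entities are nodes, relationships are edges, and alerts attach to entities. A breadth-first walk that only follows alerted entities turns isolated alerts into one connected path.

```python
from collections import defaultdict, deque


class ContextGraph:
    """Toy model: entities are nodes, relationships are undirected edges."""

    def __init__(self) -> None:
        self.edges: dict[str, set[str]] = defaultdict(set)
        self.alerts: dict[str, list[str]] = defaultdict(list)

    def relate(self, a: str, b: str) -> None:
        self.edges[a].add(b)
        self.edges[b].add(a)

    def add_alert(self, entity: str, alert: str) -> None:
        self.alerts[entity].append(alert)

    def attack_path(self, start: str) -> list[tuple[str, str]]:
        """Breadth-first walk from start, following only alerted entities."""
        seen = {start}
        queue = deque([start])
        path: list[tuple[str, str]] = []
        while queue:
            node = queue.popleft()
            path.extend((node, alert) for alert in self.alerts[node])
            for neighbor in sorted(self.edges[node]):
                if neighbor not in seen and self.alerts[neighbor]:
                    seen.add(neighbor)
                    queue.append(neighbor)
        return path


graph = ContextGraph()
# Relationships (hypothetical environment).
graph.relate("user:alice", "role:cloud-admin")
graph.relate("role:cloud-admin", "vm:build-01")
graph.relate("user:bob", "laptop:bob")

# Alerts that would otherwise arrive as unrelated tickets.
graph.add_alert("user:alice", "impossible-travel login")
graph.add_alert("role:cloud-admin", "unusual role assumption")
graph.add_alert("vm:build-01", "outbound transfer spike")
graph.add_alert("laptop:bob", "malware quarantined")

path = graph.attack_path("user:alice")
for entity, alert in path:
    print(f"{entity}: {alert}")
```

In this toy environment the three alerts tied to alice's identity, the role she can assume, and the workload that role reaches come back as a single ordered path, while the unrelated alert on bob's laptop stays out of it.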

Apply AI triage, not human queue management. Compress alert volume by continuously validating what represents real breach risk. Let analysts spend time on decisions that matter, not on tickets that don't.

Build a learning loop, not a static rulebook. Every detection, every investigation, every containment action should feed back into the system. Detections improve. Rules evolve. The same attack doesn't land twice.

The SIEM Won't Disappear Overnight

This isn't an argument for ripping out infrastructure that's taken years to build. SIEMs still serve important functions, such as compliance logging, historical forensics, and regulatory reporting. They're not going away.

But if your detection strategy is SIEM-first, you're fighting a path-based war with queue-based tools. And you're paying a centralization tax every month for the privilege.

The question isn't whether to augment your existing stack. It's whether your architecture can reason about attacker behavior the way attackers actually behave, across your entire environment, in motion, as a connected path.

The answer has to be yes.